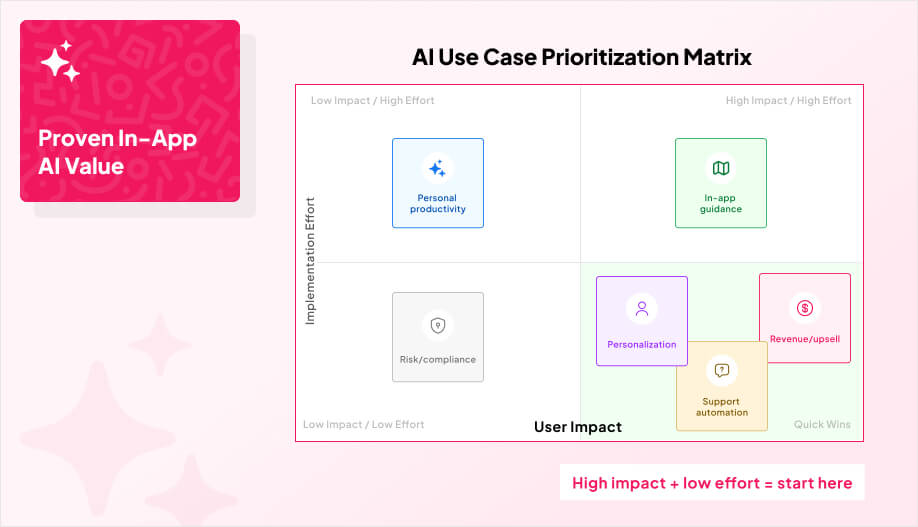

Productivity features save time. Customer experience features save the relationship.

If a user hits confusion, friction, or uncertainty inside your app, they don’t go open a help center and calmly research. They bounce, or they churn later.

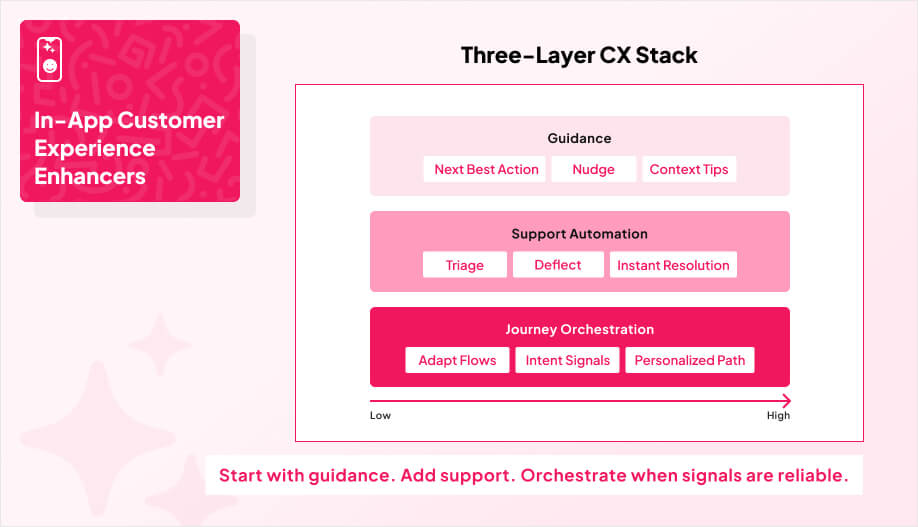

Many teams see generative AI as a way to make digital interactions feel more human, and users now expect faster, more personalized experiences than most products can deliver with static UI alone. That’s why real-time support automation is the next layer: it has been linked to roughly a 30% cost reduction in customer service operations. Instead of pushing users out to email or a ticket form, you give them contextual help right on the screen where they got stuck.

We’ll break that down into three concrete pieces below.

AI Guidance That Keeps Users Moving

AI-powered in-app guidance is what you add when you’re tired of watching users hit a confusing screen, hesitate, then disappear. Instead of forcing people to dig through FAQs or bounce into support, guidance steps in right where they are with the next best action. That can help users finish what they started, and it also takes pressure off internal teams by handling routine “what do I do here?” moments automatically.

This works because you’re shaping behavior in real time, not dumping more help content into a corner no one visits. At the same time, the global AI market is pegged at around $391B, and 70% of CX leaders plan to integrate generative AI by 2026. As more products adopt these tools, users are being trained to expect smarter experiences, and the basic “static UI + help docs” approach is falling behind.

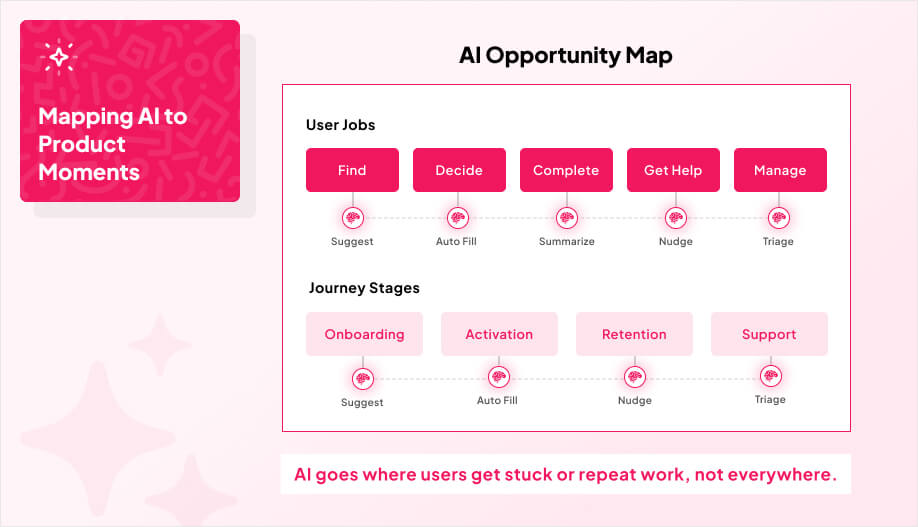

Practically, guidance layers usually do three jobs well:

- Personalize workflows using real-time intent signals and past behavior

- Suggest content, layouts, or products the way Canva-style tools already guide users

- Nudge completion when someone stalls on a key path (setup, configuration, checkout)
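To make the stall-nudge idea concrete, here is a minimal Python sketch, assuming your app already tracks which step a user is on and when they last made progress. The step names, hint text, and 45-second threshold are hypothetical placeholders, not recommendations. The point is the shape of the rule: only nudge on key paths, only after real inactivity, and only once per step.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical key-path steps and hint copy; a real product would pull these
# from its own onboarding, configuration, and checkout definitions.
KEY_STEP_HINTS = {
    "setup.connect_account": "Need your API key? Check your account settings.",
    "config.pricing_rules": "Start from a template, then tweak one rule at a time.",
    "checkout.payment": "You can save this cart and finish later.",
}

STALL_SECONDS = 45.0  # assumed threshold; tune per step from real usage data


@dataclass
class StepState:
    step: str                # current step identifier (hypothetical naming scheme)
    last_progress_at: float  # seconds since session start of the last forward action


def nudge_for(state: StepState, now: float, already_nudged: set[str]) -> Optional[str]:
    """Return a contextual hint if the user looks stalled on a key step, else None."""
    hint = KEY_STEP_HINTS.get(state.step)
    if hint is None or state.step in already_nudged:
        return None  # not a key path, or we already helped on this step
    if now - state.last_progress_at < STALL_SECONDS:
        return None  # still making progress, so stay out of the way
    already_nudged.add(state.step)  # nudge at most once per step per session
    return hint
```

In a real product the threshold would likely differ per step, and the hint text could be generated by a model instead of a fixed table; the gating logic stays the same.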

We typically embed it in our onboarding, complex configuration screens, and any high-stakes checkout flow where confusion equals lost revenue.

Once guidance is working, the next layer is to solve the problem in the moment with real-time support automation.

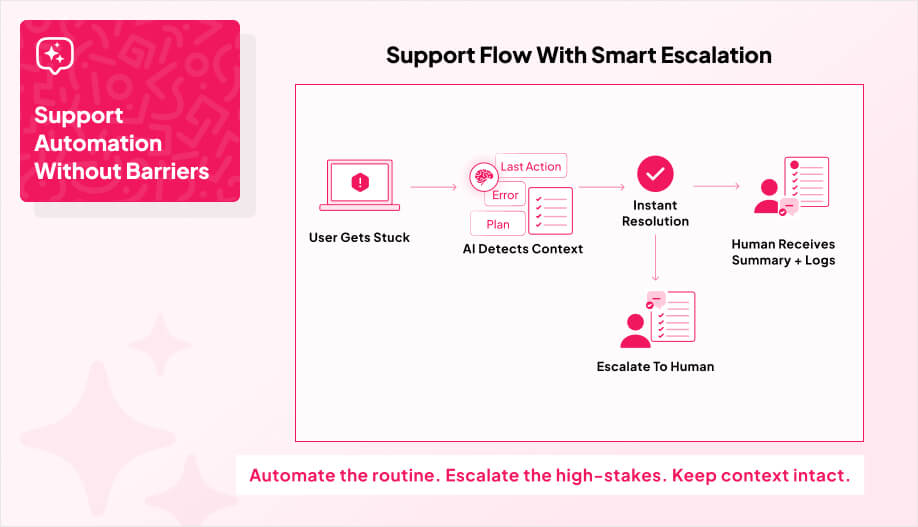

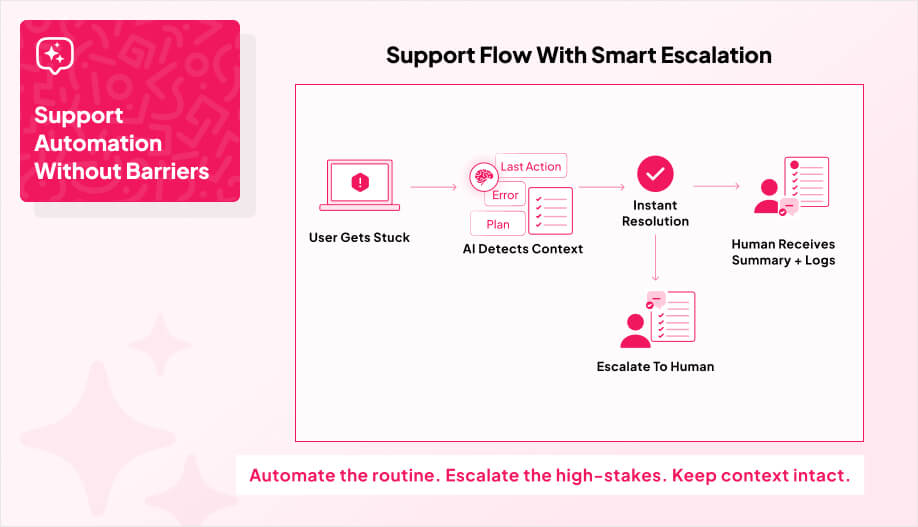

Support Automation Without The “Bot Wall” Problem

Real-time support automation is what happens when your app stops dumping users into a “contact us” dead end and starts acting like a first-line support channel.

The goal is not to “add a chatbot.” It’s to solve routine issues in the moment, route the messy stuff to a human, and keep people moving through the product instead of bouncing.

When AI agents are embedded directly in the flow, you can resolve a large share of requests without human help, cut response times by around 30%, and shrink the time spent on complex cases by 50% or more. The market is heading the same way: by 2027, AI is projected to resolve 50% of service cases.

That’s not a reason to automate everything. It’s a reason to stop treating support as an “outside the app” problem.

The best versions of this don’t feel like a support tool bolted on after the fact. They feel like the product knows what the user is doing, understands the last few steps, and can either fix the issue or hand off with context. That’s also how you protect margins and capture meaningful cost reductions in customer service operations, while still giving users a 24/7 path to answers.

Here at AppMakers USA, when we design these systems, we obsess over two things: (1) clean escalation paths so users can reach a human when it matters, and (2) tone and interaction quality so the support experience feels helpful, not robotic.
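Those two priorities, clean escalation and never trapping users, can be expressed as a small routing rule. This is a minimal sketch, assuming an upstream intent classifier that returns a label and a confidence score; the intent names, the 0.8 confidence floor, and the five-step context window are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical "routine" intents the in-app agent may resolve on its own.
ROUTINE_INTENTS = {"reset_password", "update_billing_email", "find_export_button"}
CONFIDENCE_FLOOR = 0.8  # assumed; below this, hand off instead of guessing


@dataclass
class SupportRequest:
    user_id: str
    intent: str                  # label from whatever classifier you use (assumed)
    confidence: float            # classifier confidence in [0, 1]
    asked_for_human: bool = False
    recent_steps: list[str] = field(default_factory=list)  # last screens/actions this session


@dataclass
class Decision:
    route: str                   # "auto_resolve" or "human"
    handoff_context: dict


def route_request(req: SupportRequest, context_window: int = 5) -> Decision:
    """Resolve routine, high-confidence requests in-app; escalate the rest with context."""
    if req.asked_for_human:
        reason = "user_requested_human"  # an explicit request for a person always wins
    elif req.intent not in ROUTINE_INTENTS:
        reason = "non_routine_intent"
    elif req.confidence < CONFIDENCE_FLOOR:
        reason = "low_confidence"
    else:
        return Decision("auto_resolve", {})
    # Pass along what the user was just doing so they don't have to repeat themselves.
    return Decision("human", {
        "user_id": req.user_id,
        "intent": req.intent,
        "reason": reason,
        "recent_steps": req.recent_steps[-context_window:],
    })
```

Checking `asked_for_human` first is the design choice that avoids the “bot wall”: a user who asks for a person gets one, no matter how confident the classifier is.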

Once support can actually solve problems in real time, the next step is using those same signals to shape the experience itself, which leads into personalized journey orchestration.

Personalized Journeys That Adapt In Real Time

Most apps track clicks and page views. That’s not the same as orchestrating an experience.

Orchestration means your app reacts to what a user is doing right now and adjusts the path in-session. If someone is high-intent, you stop slowing them down and move them into a faster, conversion-focused flow. If they’re unsure, you give them more context and help before you push an offer. That’s the whole idea.
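Here is a minimal sketch of that in-session decision, assuming a hand-weighted intent score over the current session’s events. The event names, weights, and cutoffs are hypothetical; a production system would more likely use a trained model, but the loop is the same: recompute after each event and switch flows when the score crosses a threshold.

```python
# Hypothetical event weights; in practice these would come from a model
# trained on your own conversion data rather than hand-picked numbers.
INTENT_WEIGHTS = {
    "viewed_pricing": 2.0,
    "added_to_cart": 3.0,
    "started_checkout": 4.0,
    "opened_help": -1.5,
    "revisited_same_page": -1.0,
}

HIGH_INTENT = 5.0  # assumed cutoffs
LOW_INTENT = 1.0


def intent_score(session_events: list[str]) -> float:
    """Sum the weights of events seen so far this session; unknown events count as 0."""
    return sum(INTENT_WEIGHTS.get(e, 0.0) for e in session_events)


def choose_flow(session_events: list[str]) -> str:
    """Re-decide the user's path from the current session's signals."""
    score = intent_score(session_events)
    if score >= HIGH_INTENT:
        return "fast_checkout"     # high intent: remove steps and get out of the way
    if score <= LOW_INTENT:
        return "guided_explainer"  # unsure: add context and help before any offer
    return "standard"
```

Calling `choose_flow` after every event is what makes this same-session re-decisioning: a session that starts with `["viewed_pricing"]` lands in `standard`, drops to `guided_explainer` once `opened_help` arrives, and moves to `fast_checkout` after `added_to_cart` and `started_checkout`.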

This matters because expectations have changed. 73% of customers expect personalized interactions, and CX leaders attribute up to 25% profit lifts to better experiences. The market is also not waiting. 95% of companies planned to implement AI-powered customer service by 2025, which means “always-on, personalized guidance” stops being a differentiator and starts being table stakes.

The implementation is less mystical than people make it out to be:

- Unify the customer profile across web, app, and even in-store visits, if that applies.

- Use AI for “same-session re-decisioning,” so flows, offers, and even support adapt as intent shifts.

- Pair behavioral signals with voice-of-customer signals to surface friction fast (Quantum Metric is one example of a platform for this), and keep latency low so personalization feels instant.
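Profile unification, the first item in that list, mostly comes down to mapping channel-specific identifiers to one customer and merging their events into a single timeline. A minimal sketch, assuming an identity map already exists (for example, built when a user logs in or scans a loyalty card); the `Event` and `Profile` shapes and the identifier names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    source_id: str  # web cookie, device ID, or loyalty card number (hypothetical identifiers)
    channel: str    # "web", "app", or "store"
    ts: float       # event time in epoch seconds
    name: str


@dataclass
class Profile:
    customer_id: str
    channels: set[str] = field(default_factory=set)
    timeline: list[Event] = field(default_factory=list)


def unify(events: list[Event], identity_map: dict[str, str]) -> dict[str, Profile]:
    """Fold per-channel events into one profile per known customer.

    identity_map links each source_id to a customer_id (assumed to exist already);
    events from unlinked source_ids are skipped rather than guessed at.
    """
    profiles: dict[str, Profile] = {}
    for ev in events:
        cid = identity_map.get(ev.source_id)
        if cid is None:
            continue
        profile = profiles.setdefault(cid, Profile(cid))
        profile.channels.add(ev.channel)
        profile.timeline.append(ev)
    for profile in profiles.values():
        profile.timeline.sort(key=lambda e: e.ts)  # one chronological view across channels
    return profiles
```

Skipping unlinked identifiers is a deliberately conservative choice; probabilistic identity matching is possible, but it is a separate problem with its own accuracy and privacy trade-offs.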

We treat orchestration as a system, not a feature: real-time signals replace static campaigns, analytics expose friction, and predictive nudges (push, in-app, email) are tied to conversion and retention, not “engagement” vanity metrics.